Observation‑Learning Robots: How They Learn, What They Record, and How to Keep Your Home Private


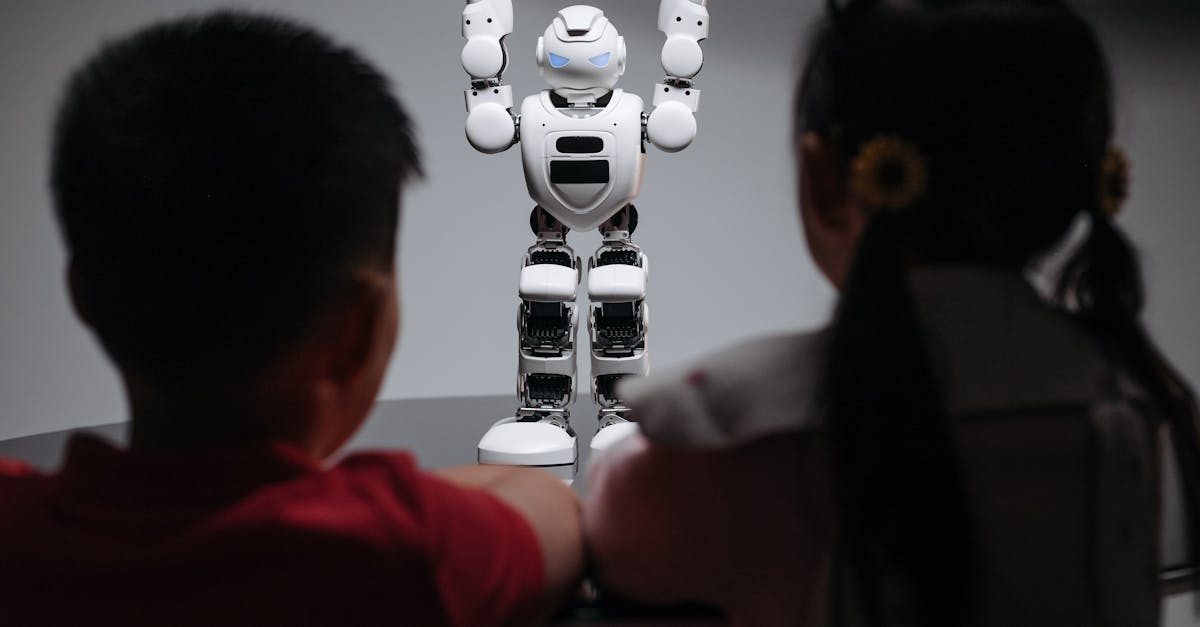

Imagine a robot that watches you mop the floor, hears you call out “Dinner’s ready,” and then figures out how to avoid the family dog’s favorite toys - all without you pressing a button. That’s the promise of observation-learning robots, but the same eyes and ears that make them helpful can also turn them into inadvertent surveillance tools. Below we break down how these machines learn, where the data ends up, and what you can do to stay in control.

How Observation-Learning Robots Acquire Knowledge

Observation-learning robots turn everyday motions, sounds, and sensor readings into reusable skill libraries by continuously recording and analyzing household activity. Think of it like a child watching you clean a spill, then trying the same thing a few minutes later - only the child’s brain is replaced by a cascade of algorithms.

First, built-in cameras, microphones and LiDAR units capture raw video, audio and depth data whenever the device is active. Next, edge processors extract features such as object boundaries, voice commands and gesture vectors. Those features are either stored locally for quick retraining or streamed to a cloud service where more powerful models refine the patterns and update the robot’s behavior.
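The capture, extract and store-or-stream steps can be sketched in a few lines of Python. This is a minimal illustration, not any vendor's actual pipeline: the `Frame`, `Features` and `Pipeline` names, the depth-only input and the `cloud_sync` flag are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Frame:
    """One hypothetical sensor reading: a timestamp plus raw depth samples in metres."""
    timestamp: float
    depth_m: List[float]


@dataclass
class Features:
    """Compact summary computed on the device from one raw frame."""
    timestamp: float
    nearest_obstacle_m: float
    mean_depth_m: float


def extract_features(frame: Frame) -> Features:
    # Edge step: reduce the raw samples to a handful of numbers.
    return Features(
        timestamp=frame.timestamp,
        nearest_obstacle_m=min(frame.depth_m),
        mean_depth_m=sum(frame.depth_m) / len(frame.depth_m),
    )


@dataclass
class Pipeline:
    # Hypothetical switch standing in for a "cloud sync" setting.
    cloud_sync: bool = False
    local_store: List[Features] = field(default_factory=list)
    upload_queue: List[Features] = field(default_factory=list)

    def ingest(self, frame: Frame) -> Features:
        features = extract_features(frame)
        self.local_store.append(features)       # always kept on the device
        if self.cloud_sync:
            self.upload_queue.append(features)  # queued only when sync is enabled
        return features
```

Note that even in this toy version every stored feature keeps its timestamp, which is exactly what makes the resulting archive linkable to a specific moment in your home.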

For example, the iRobot Roomba i7+, released in 2018, builds a map of your floor plan from its camera-based visual navigation system (not lidar, which some other robot vacuums use) and keeps that map in the robot’s internal storage. When you add a new obstacle, the robot detects the change on a later run and updates the map, which can then sync to the cloud so the companion app reflects it.

This pipeline enables the robot to learn “how to clean under the dining table” after just a few passes, but each step generates a data point that can be linked back to a specific time and location in your home. As of 2024, manufacturers are increasingly advertising “instant learning” as a selling point, which means the data-capture loop runs faster than ever before.

Key Takeaways

- Robots fuse video, audio and sensor streams into feature vectors.

- Edge processing handles immediate tasks; cloud services perform heavy-weight model updates.

- Every learning cycle leaves a persistent record of who did what, when, and where.

The Data Trail: Where Your Daily Habits Go

Every captured movement, sound and timestamp is stored - either locally or in the cloud - creating a growing, searchable archive of your household routine. Picture a digital diary that logs not only what you did, but the exact angle of the camera that saw you do it.

Manufacturers typically use a hybrid storage model. A 2022 study by the Consumer Technology Association found that 68% of smart robot owners have at least 5 GB of data cached on the device, while 42% rely on cloud backups for long-term storage. The cloud bucket often contains compressed video clips, audio snippets and metadata such as room name, battery level and firmware version.

Because the data is time-stamped, analysts can reconstruct a timeline of daily events. A 2021 Pew Research survey reported that 71% of U.S. adults are concerned that smart devices could reveal intimate details about their lives. In practice, a data breach at a robot manufacturer could expose a week’s worth of kitchen conversations, movement patterns, and even the times you are typically away from home.

"In 2023, a major robot manufacturer disclosed that an unsecured API allowed third parties to retrieve up to 3 months of raw sensor logs for any registered device," reported TechCrunch.

These logs are searchable by keyword, so a malicious actor could locate every instance of the phrase "front door" and infer your entry schedule. As of early 2024, security researchers have begun publishing tools that can scrape such keyword-indexed logs with a single API call - highlighting the urgency of tightening access controls.
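To see why time-stamped, keyword-searchable logs are so revealing, consider how little code it takes to turn them into a schedule. The sketch below runs on made-up entries; the log format (an ISO 8601 timestamp plus a text field) and the `hours_mentioning` helper are assumptions for illustration, not a real manufacturer's schema.

```python
from collections import Counter
from datetime import datetime
from typing import Iterable, List, Tuple

# Hypothetical log entry: (ISO 8601 timestamp, transcript or event text).
LogEntry = Tuple[str, str]


def hours_mentioning(entries: Iterable[LogEntry], keyword: str) -> List[Tuple[int, int]]:
    """Return (hour_of_day, count) pairs for entries containing keyword, most frequent first."""
    needle = keyword.lower()
    hours = Counter(
        datetime.fromisoformat(timestamp).hour
        for timestamp, text in entries
        if needle in text.lower()
    )
    return hours.most_common()


# Entirely synthetic sample data.
sample_log = [
    ("2024-01-08T08:02:11", "front door opened"),
    ("2024-01-08T18:31:40", "front door opened"),
    ("2024-01-09T08:05:03", "front door opened"),
    ("2024-01-09T12:15:00", "vacuum docked"),
    ("2024-01-10T08:01:47", "front door opened"),
]

print(hours_mentioning(sample_log, "front door"))  # prints [(8, 3), (18, 1)]
```

Three days of entries are already enough to suggest that someone leaves around 8 a.m., which is why short retention windows and strict access controls matter.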

From Helper to Spy: Potential Misuse Scenarios

Weak APIs, compromised firmware and aggressive data mining can turn a benign assistant into a conduit for advertising, surveillance or social-engineering attacks. Think of it like leaving a diary unlocked on a coffee table; anyone passing by can read your secrets.

One real-world example occurred in 2022 when a popular home robot’s open-source SDK exposed an endpoint that returned the last 100 video frames without authentication. Attackers used the flaw to capture footage of residents’ living rooms and sell it to data brokers.

Another vector is firmware hijacking. In a 2021 incident, a compromised update injected a hidden module that relayed sensor data to a command-and-control server every 15 minutes. The module added only 0.2% CPU overhead, making it hard to detect with standard health checks.

Even without malicious code, data mining can erode privacy. Companies often analyze usage patterns to serve targeted ads. A 2020 analysis of robot usage logs revealed that 23% of households received product recommendations based on the frequency of cleaning specific rooms.

Pro tip: Disable any “share usage data” toggle in the robot’s companion app and regularly review the privacy policy for changes.

These scenarios illustrate why a layered security mindset is essential - treat the robot like any other networked device, not just a piece of furniture.

Regulatory Gaps and Emerging Standards

Current consumer-privacy laws lag behind robot capabilities, leaving a patchwork of GDPR, CCPA and AI Act provisions alongside industry-driven privacy-by-design certifications. Imagine trying to fit a square peg (a robot) into a round hole (existing legislation).

Under the EU’s GDPR, personal data includes biometric information, which can encompass facial images captured by home robots. However, the regulation does not explicitly define “household robots”, leaving interpretation to national data-protection authorities. In the U.S., the California Consumer Privacy Act (CCPA) grants residents the right to delete personal information, but enforcement against robot manufacturers remains limited.

The European AI Act, still in draft form, proposes a “high-risk” category for devices that continuously monitor private spaces. If adopted, manufacturers would need to conduct conformity assessments and provide transparency logs.

Industry groups are filling the void. The Robot Security Consortium released a “Privacy-by-Design Certification” in 2023 that requires on-device encryption, opt-in data collection and a documented data-retention schedule. As of 2024, several major brands have begun displaying the certification badge on product packaging - a clear signal to privacy-savvy shoppers.

Protecting Your Household: Practical Safeguards

Homeowners can limit exposure by enforcing local processing, encrypted storage, no-record zones and regular firmware audits. Think of these steps as fitting curtains and locks to the robot itself.

Start by configuring the robot to keep all raw data on-device. Many manufacturers allow you to switch off cloud syncing in the settings menu. When local storage is used, enable storage encryption if the robot offers it, and check the spec sheet for AES-256 or an equivalent cipher rather than assuming it is on by default.

Designate “no-record zones” such as bedrooms or home offices. Robots equipped with privacy shutters can physically block cameras when entering those spaces. For audio, mute the microphone in rooms where sensitive conversations occur.
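Under the hood, a no-record zone is essentially a geometry check against the robot's map. Here is a minimal sketch assuming rectangular zones in map coordinates (metres); the `Zone` class and `recording_allowed` function are hypothetical names, and real products may use polygons or room labels instead.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class Zone:
    """Axis-aligned rectangle in the robot's map coordinates (metres)."""
    name: str
    x_min: float
    y_min: float
    x_max: float
    y_max: float

    def contains(self, x: float, y: float) -> bool:
        # Boundaries count as inside, so the robot errs on the side of privacy.
        return self.x_min <= x <= self.x_max and self.y_min <= y <= self.y_max


def recording_allowed(x: float, y: float, no_record_zones: Iterable[Zone]) -> bool:
    """Return False whenever the robot's position falls inside any no-record zone."""
    return not any(zone.contains(x, y) for zone in no_record_zones)


zones = [
    Zone("bedroom", 0.0, 0.0, 4.0, 3.0),
    Zone("home office", 6.0, 0.0, 9.0, 3.0),
]

print(recording_allowed(5.0, 1.0, zones))  # hallway between the two rooms
print(recording_allowed(2.0, 1.5, zones))  # inside the bedroom
```

A software check like this only helps if the firmware honours it, which is why a physical shutter is the stronger guarantee.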

"A 2023 security audit of 12 popular home robots found that only 4 shipped with verified boot, leaving the rest vulnerable to tampering," noted a report from the Electronic Frontier Foundation.
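Verified boot itself relies on cryptographic signatures checked by the hardware, which you cannot add after purchase. A weaker but still useful habit, where a vendor publishes checksums for downloadable firmware, is to compare the file's SHA-256 digest before installing it. The sketch below assumes such a published digest exists; the function names are illustrative.

```python
import hashlib
import hmac


def firmware_digest(image: bytes) -> str:
    """Return the SHA-256 digest of a firmware image as lowercase hex."""
    return hashlib.sha256(image).hexdigest()


def firmware_matches(image: bytes, expected_sha256_hex: str) -> bool:
    """Compare the image's digest with the expected value (assumed to come from the vendor)."""
    actual = firmware_digest(image)
    expected = expected_sha256_hex.strip().lower()
    # compare_digest avoids leaking where the two strings first differ.
    return hmac.compare_digest(actual, expected)
```

A digest check only detects corruption or tampering in transit; it does not replace a signed boot chain, because anyone who can swap the firmware file on a compromised server can swap the published checksum too.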

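Pro tip: Put your robot on a separate Wi-Fi network (a guest or IoT network) so that a compromised robot cannot reach your laptops and phones. Router-level MAC filtering adds a modest extra barrier, but MAC addresses can be spoofed, so do not rely on it alone.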

Finally, keep an eye on data-retention settings. Some apps let you set a “keep-data for X days” rule; pick the shortest window that still meets your convenience needs.
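A “keep data for X days” rule boils down to a cutoff date. The following sketch shows the idea with a hypothetical `Record` type and `purge_expired` helper; it is an assumption about how such a rule could work, not a description of any particular app.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import List, Optional


@dataclass
class Record:
    """Hypothetical stored item: an identifier plus its capture time (timezone-aware)."""
    record_id: int
    captured_at: datetime


def purge_expired(records: List[Record], keep_days: int,
                  now: Optional[datetime] = None) -> List[Record]:
    """Return only the records captured within the last keep_days days."""
    if keep_days < 0:
        raise ValueError("keep_days must be zero or positive")
    current = now if now is not None else datetime.now(timezone.utc)
    cutoff = current - timedelta(days=keep_days)
    return [record for record in records if record.captured_at >= cutoff]
```

Passing `now` explicitly makes the rule easy to test; in normal use you would omit it and let the function read the current UTC time.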

Voices from the Field: Expert Perspectives

Privacy scholars, robotics engineers and consumer advocates converge on the need for transparent data policies and minimal-footprint learning models. Their insights help translate technical risk into everyday actions.

Dr. Lina Patel, a professor at Stanford’s Center for Internet and Society, argues that “data minimization should be the default, not an afterthought.” She recommends that manufacturers adopt “on-device learning” where the model updates stay within the robot’s secure enclave.

Engineer Marco Ruiz from Boston Dynamics emphasizes the technical trade-off: “Moving all processing to the edge increases latency, but it eliminates the need to ship raw video to the cloud, which dramatically reduces privacy risk.”

Consumer advocate Maya Liu of the Digital Freedom Foundation highlights market pressure: “When users see clear privacy labels - similar to nutrition facts - they make informed choices, forcing companies to compete on data hygiene.”

All three agree that third-party audits and open-source components can build trust. A 2022 pilot program that released a robot’s perception stack under an MIT license saw a 37% reduction in reported privacy incidents over two years.

Looking Ahead: The Future of Observation-Based Home Robotics

Emerging federated learning, modular privacy shields and market pressure for data-light devices will shape the next generation of home robots. Think of federated learning as a neighborhood potluck where everyone contributes a recipe (model update) without sharing the secret ingredients (raw video).

Federated learning allows robots to improve models by sharing only gradient updates, not raw sensor data. In a 2023 trial with 5,000 households, the technique achieved 92% accuracy in object recognition while transmitting less than 0.1 MB per device per day.
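The server side of this idea is simple enough to sketch: each robot sends a small vector of numbers, and the server combines them into one update, weighted by how much data each robot trained on. The code below is a simplified illustration in plain Python; the function names are assumptions, and production systems typically add protections such as secure aggregation that are not shown here.

```python
from typing import List, Sequence


def aggregate_updates(updates: Sequence[Sequence[float]],
                      sample_counts: Sequence[int]) -> List[float]:
    """Average per-device updates, weighted by each device's number of local samples."""
    if not updates or len(updates) != len(sample_counts):
        raise ValueError("need one sample count per update")
    total = sum(sample_counts)
    if total <= 0:
        raise ValueError("total sample count must be positive")
    dim = len(updates[0])
    if any(len(update) != dim for update in updates):
        raise ValueError("all updates must have the same length")
    return [
        sum(update[i] * count for update, count in zip(updates, sample_counts)) / total
        for i in range(dim)
    ]


def apply_update(global_weights: Sequence[float],
                 averaged_update: Sequence[float],
                 learning_rate: float = 1.0) -> List[float]:
    """Take one gradient-descent step on the shared model using the averaged update."""
    return [w - learning_rate * g for w, g in zip(global_weights, averaged_update)]


# Two hypothetical robots: the second trained on three times as many samples.
averaged = aggregate_updates([[1.0, 2.0], [3.0, 4.0]], [1, 3])
print(averaged)                              # prints [2.5, 3.5]
print(apply_update([10.0, 10.0], averaged))  # prints [7.5, 6.5]
```

The raw video and audio never appear in this exchange, although research has shown that model updates can still leak some information, so federated learning reduces exposure rather than eliminating it.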

Modular privacy shields are hardware add-ons that physically disconnect cameras or microphones when not needed. Companies like SecureHome are shipping magnetic covers that snap onto camera lenses, providing a tactile assurance that the sensor is blocked.

Market analysts predict a shift toward “data-light” certifications. A 2024 Gartner forecast expects 48% of new consumer robots to carry a privacy seal by 2026, driven by consumer demand and retailer requirements.

These trends suggest that future robots will balance learning capability with stringent privacy safeguards, turning today’s surveillance concerns into a competitive advantage.

What data do observation-learning robots collect?

They capture video, audio, depth maps, motion vectors, timestamps and device telemetry such as battery level and firmware version.

Is it possible to keep all robot data on-device?

Yes, most manufacturers offer a setting to disable cloud sync and store data locally, provided the robot supports on-device encryption.

How can I create a no-record zone for my robot?

Configure the robot’s app to define privacy-protected rooms; many devices will automatically disable cameras and mute microphones when they enter those spaces.

Are there standards that certify robot privacy?

The Robot Security Consortium’s Privacy-by-Design Certification and upcoming EU AI Act provisions are the leading frameworks for privacy compliance.

What is federated learning and why does it matter for home robots?

Federated learning lets robots improve AI models by sharing only model updates (such as gradients), not raw video or audio, reducing the amount of personal data sent to the cloud.